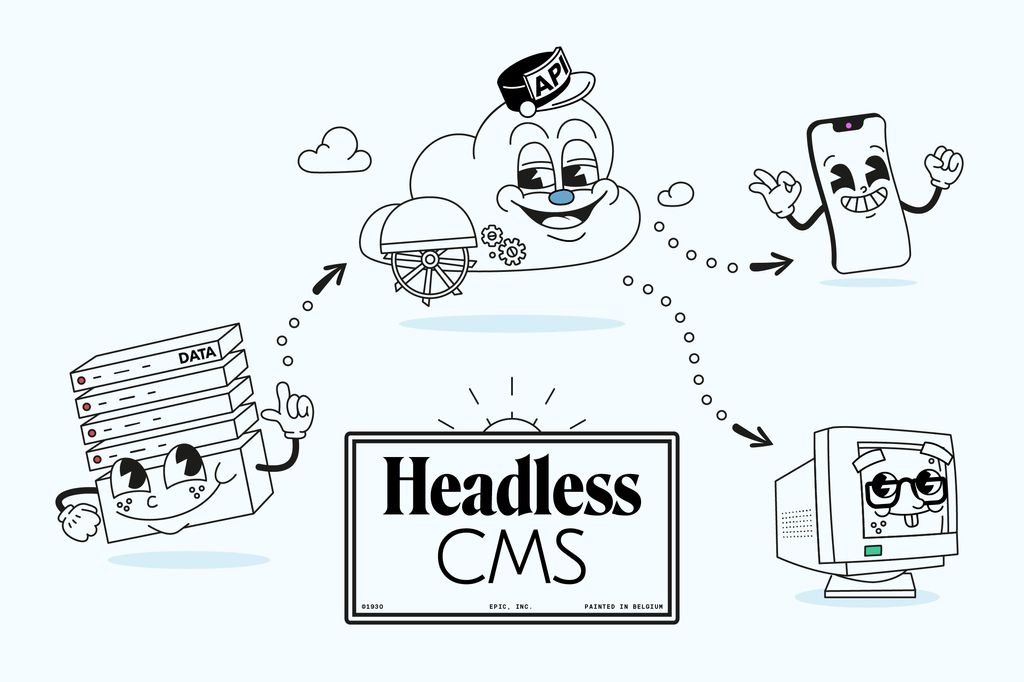

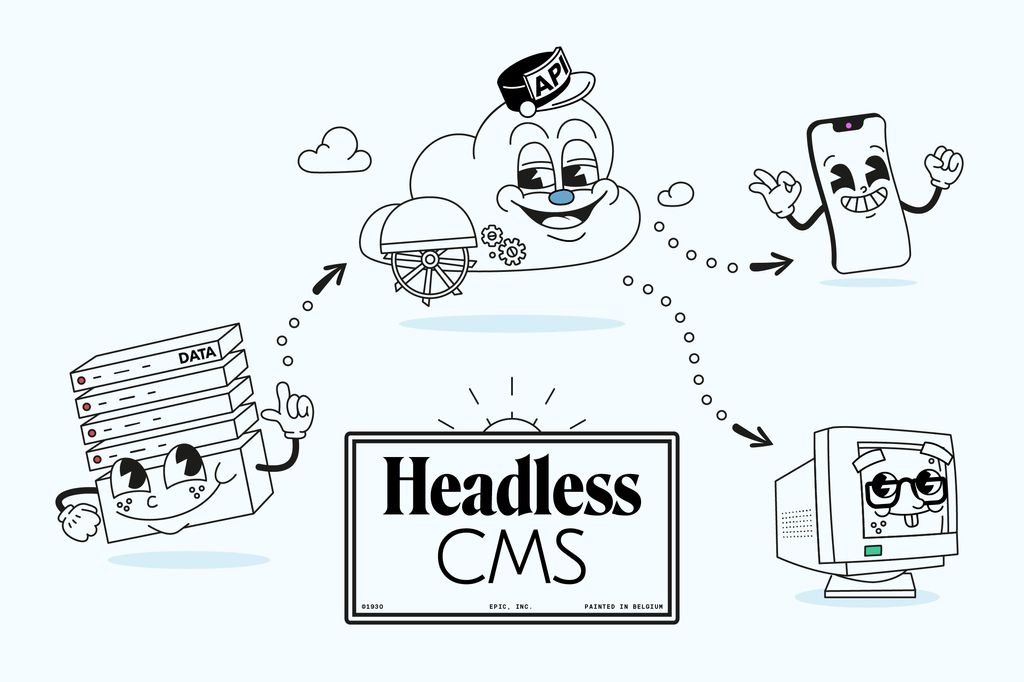

We developed a Facebook Instant Game for Red Bull, to promote their “AirDrop media campaign” for the worldwide launch of a new range of soft drinks.

This was an intensive and challenging project that forced us to get technically creative, so we thought we’d share our experience.

The game we decided to build is an infinite “sky chaser”. You are the pilot of a hot-air balloon that has to grab as many Organics™ cans as possible and drop them at specific spots to earn points. The gameplay is enriched with several bonuses, special game mechanics and social features.

Below are some of the techniques we used to develop this project.

We used Three.js for the whole 3D scene and pixi.js for everything 2D: the UI, the game HUD and the particles (made with PIXI particles).

With the most recent versions of both frameworks, it’s really easy to share the same WebGL context, which improves performance.

In many cases, overlaying HTML/CSS on top of a canvas results in performance drops due to how the browser handles the compositing phase. We couldn’t afford anything of the sort, hence the decision to use only WebGL rendering while playing.

It’s important to note that there are no lights in the scene below. All the fake lighting comes from a spherical reflection map using a matcap texture, which improves the performance of the game even more.

```javascript
const canvas = document.createElement('canvas');

// Both renderers draw into the same canvas and share a single WebGL context.
const rendererThree = new THREE.WebGLRenderer({
  canvas: canvas
});

const rendererPixi = new PIXI.WebGLRenderer({
  view: canvas,
  context: rendererThree.context
});

function loop() {
  // Reset the cached GL state around each render call,
  // because the other renderer may have changed it in between.
  rendererThree.state.reset();
  rendererThree.render(scene, camera);
  rendererThree.state.reset();

  rendererPixi.reset();
  // Third argument (clear) is false so the 3D scene is not wiped.
  rendererPixi.render(stage, undefined, false);
  rendererPixi.reset();
}
```

The final color is obtained by multiplying the diffuse texture with the matcap lookup, creating a much more polished look.

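```glsl
// Matcap lookup computed from the reflection vector
vec3 r = reflect(normalize(vec3(vPos)), normal);
float m = 2.82842712474619 * sqrt(r.z + 1.01);
vec3 matcapColor = texture2D(uMatcap, r.xy / m + .5).rgb;

// Alternative lookup using the vN coordinates. Only one of the two
// declarations can be active, otherwise matcapColor is declared twice.
// vec3 matcapColor = texture2D(uMatcap, vN).rgb;

vec3 diffuseColor = texture2D(uDiffuse, uv).rgb;
vec3 color = diffuseColor * (matcapColor.r * 1.3);
```

Decoupling the game engine from the rendering engine had worked rather well on our previous project… and you know what they say about never changing a winning team.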

So we applied the same pattern to this game. This allowed us to test and validate the logic without worrying about the rendering (and vice versa).

The gameplay being a bit simpler this time (see UPS Delivery Day), we took some time to optimise the collision detection system a little.

The idea was to avoid using a library (collision, physics, …) and still end up with lightweight code. As each object maps to a disc moving on a 2D plane, the game didn’t require a huge amount of precision. A simple formula was therefore sufficient to detect collisions:

```javascript
/**
 * Calculate the distance between 2 points
 *
 * @param {object} p1 - First point
 * @param {number} p1.x - First x coordinates
 * @param {number} p1.y - First y coordinates
 * @param {object} p2 - Second point
 * @param {number} p2.x - Second x coordinates
 * @param {number} p2.y - Second y coordinates
 *
 * @returns {number} - Distance between centers
 */
function calculateDistance(p1, p2) {
  const dx = p1.x - p2.x;
  const dy = p1.y - p2.y;

  return Math.sqrt((dx * dx) + (dy * dy));
}

// Check if distance is smaller than the sum of the radiuses
calculateDistance(balloon, obj) < balloon.r + obj.r; // eslint-disable-line
```
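Since only the comparison matters, one possible variation is to skip the square root and compare squared values instead. This is a minimal sketch, not the game's actual code: the `isColliding` name is made up for illustration, and it assumes each object exposes `x`, `y` and `r` as in the snippet above.

```javascript
// Hypothetical helper: disc-vs-disc overlap test without Math.sqrt.
// Assumes each object has a center (x, y) and a radius r.
function isColliding(a, b) {
  const dx = a.x - b.x;
  const dy = a.y - b.y;
  const radii = a.r + b.r;

  // Compare the squared distance with the squared sum of the radii.
  return (dx * dx) + (dy * dy) < radii * radii;
}

const balloon = { x: 0, y: 0, r: 2 };
const can = { x: 3, y: 0, r: 1.5 };
const cloud = { x: 10, y: 10, r: 1 };

console.log(isColliding(balloon, can));   // true
console.log(isColliding(balloon, cloud)); // false
```

Both forms give the same answer for non-negative radii, so the choice mostly comes down to readability versus saving one `Math.sqrt` per pair.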

The game engine therefore handles all movement in that same 2D plane (this is only possible because the hot-air balloon and the other objects fly at the same altitude).

With the help of two small functions (worldToGame and gameToWorld), we could easily go back and forth between both worlds…

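```javascript
/* eslint-disable no-undef, no-unused-vars */
// _v2, _v3, map and component are defined elsewhere in the project.
class GameCamera extends component(OrthographicCamera) {
  // 3D world position -> normalized 2D game coordinates (0..1)
  worldToGame(v3) {
    this.updateMatrixWorld();

    _v3.copy(v3);
    _v3.project(this);

    _v2.set(
      (_v3.x + 1) / 2,
      1 - ((_v3.y + 1) / 2)
    );

    return _v2;
  }

  // Normalized 2D game coordinates (0..1) -> 3D world position on the ground plane
  gameToWorld(x, y) {
    _v3.x = map(x, 0, 1, this.left, this.right);
    _v3.y = 0.0;
    _v3.z = map(y, 0, 1, -this.top, this.bottom);

    return _v3;
  }
}
```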
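The `map` helper used in `gameToWorld` is not shown in the snippet. A minimal sketch, assuming it is a plain linear remap from one range to another (the signature below is inferred from how it is called, not taken from the project):

```javascript
// Hypothetical linear remap: takes `value` from [inMin, inMax] to [outMin, outMax].
// The original helper is not shown in the article, so this is an assumption.
function map(value, inMin, inMax, outMin, outMax) {
  const t = (value - inMin) / (inMax - inMin);
  return outMin + t * (outMax - outMin);
}

// With a camera frustum going from -20 to 20, the normalized game
// coordinate 0.5 lands in the middle of the visible area.
const left = -20;
const right = 20;

console.log(map(0.5, 0, 1, left, right)); // 0
console.log(map(0, 0, 1, left, right));   // -20
```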

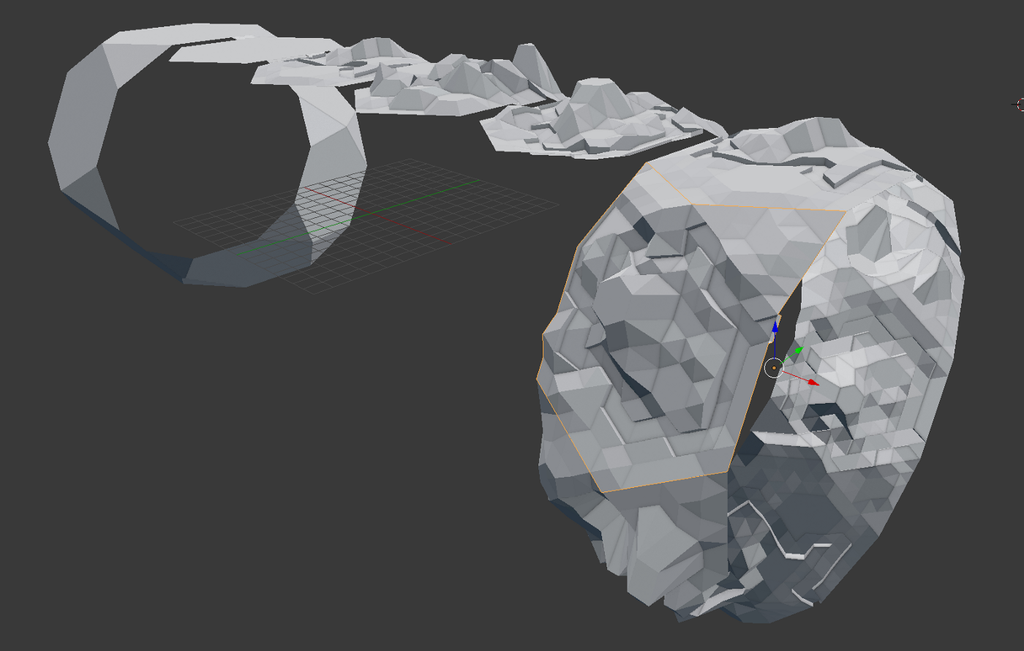

We wanted to go for a low-poly look and feel for this game: playful, but modern enough for the game’s audience and Red Bull’s tone of voice.

If you work with flat shading in Blender, the exporter automatically adds extra vertices to your mesh. Blender does this so that the normals are not “smoothed” when passed to the fragment shader.

To keep the file size to a minimum, we exported the assets with shading set to “Smooth” and without normals. As a result, our final assets consist of just two buffers: positions and UVs.

We then approximated the normals in our fragment shader using the WebGL extension OES_standard_derivatives.

Doing so, we were able to shave around 70% off the size of the 3D assets and reduce the total vertex count.

vec3 fdx = vec3( dFdx( vPos.x ), dFdx( vPos.y ), dFdx( vPos.z ) );

vec3 fdy = vec3( dFdy( vPos.x ), dFdy( vPos.y ), dFdy( vPos.z ) );

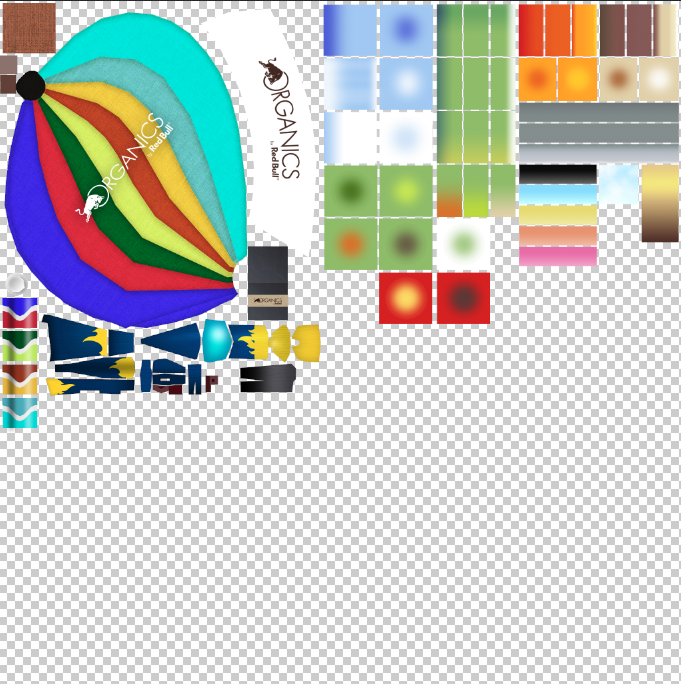

vec3 normal = normalize( cross( fdx, fdy ) );Facebook requires Instant games to load within 5 seconds, so it was crucial to keep the whole build size to a strict minimum. The main issue were the textures for the terrain.

Painting each terrain tile was not a viable option for us as it would have resulted in huge texture files. So we decided to use gradient squares instead, and to map the UVs on top of them.

This not only saved us a lot of texture size, it also opened up some interesting possibilities. For example, if the UV coordinates fall within a given range, they can be identified as water and we can animate them as such:

```glsl
// Water
if (vUv.x > startX && vUv.x < endX && vUv.y > 1.0 - endY) {
  float tt = uTime * 3.0;
  uv += texture2D(uNoise, uv * (80.0 * uv.x + uv.y * 100.0 + tt * 0.1) + tt * 0.08).r * 0.028;
  uv -= uv.x * 0.006;
}
```

To achieve the infinite, globe-like effect, we split our terrain into nine different tiles. We first tried modelling our tiles curved around a sphere, with the origin point at the center.

The idea was to have them rotate around the sphere. We soon realised the limitations of this method: first of all, it would have been quite complicated to model the tiles that way, and we would have ended up with a limited number of tiles.

We scrapped that idea and decided to go for a different approach.

We modelled our tiles as flat items, then we “bent” them in the vertex shader. This simplified the 3D modelling, but also gave us the opportunity to fine-tune and adjust the bending of the tiles during the whole development process.

We used glslify to split our GLSL code into different files and functions. This allowed us to reuse the bending not only for the terrain, but also for the “flying objects”. That’s how they are able to follow the same curve perfectly.

```glsl
// Bend a vertex so that there is an effect like "globe"
const float bendingStart = 150.0;
const float bendingEnd = 270.0;
const float bendingForce = 30.0;

vec3 bend(vec3 worldPos) {
  float bending = smoothstep(bendingStart, bendingEnd, -worldPos.z);
  bending = clamp(bending, 0.0, 1.0);

  return vec3(
    worldPos.x,
    worldPos.y - bending * bendingForce,
    worldPos.z - bending * bendingForce
  );
}

#pragma glslify: export(bend)
```

As soon as a tile goes behind the camera, it’s marked as free and becomes eligible for reuse. In the final version we applied a slight fog, so that the user doesn’t see the tile immediately. To avoid too much “repetition”, we used a little trick: when a new tile is added, there is a 50% chance that it will be flipped.
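The recycling logic described above can be sketched roughly as follows. This is a simplified, hypothetical version and not the game's actual code: it uses a single strip of tiles instead of the real layout, and every name (`createTiles`, `updateTiles`, `flipped`, `cameraZ`) as well as the z convention is an assumption made for illustration.

```javascript
// Hypothetical tile recycler. All names and the z convention are assumptions:
// tiles scroll toward +z and the camera sits at cameraZ looking down -z.
function createTiles(count, length) {
  const tiles = [];
  for (let i = 0; i < count; i++) {
    tiles.push({ z: -i * length, flipped: false });
  }
  return tiles;
}

function updateTiles(tiles, speed, cameraZ, length, random = Math.random) {
  for (const tile of tiles) {
    tile.z += speed;
  }
  for (const tile of tiles) {
    // A tile that is a full length past the camera is no longer visible.
    if (tile.z > cameraZ + length) {
      // Recycle it: move it to the far end of the strip...
      const farthest = Math.min(...tiles.map((t) => t.z));
      tile.z = farthest - length;
      // ...and flip it half of the time to break up the repetition.
      tile.flipped = random() < 0.5;
    }
  }
}

const tiles = createTiles(3, 10);
updateTiles(tiles, 15, 0, 10);
console.log(tiles.map((t) => t.z)); // [-15, 5, -5]
```

Passing the random source as a parameter is only there to make the flip testable. In a renderer, `flipped` would typically translate into mirroring the tile, for example with a negative scale on one axis.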

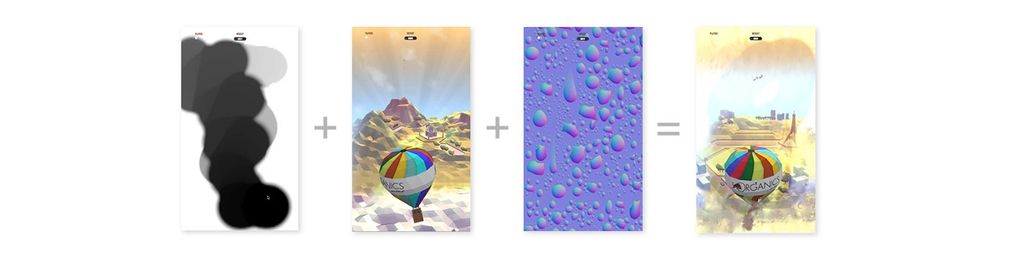

During the game, if you run into a “cloud”, your screen starts filling up with mist. You can either wait for it to disappear or clean it off by swiping furiously on your screen.

To achieve this feature, we generated a trail effect using an offscreen scene rendered into a frame buffer object. We then processed it in the post-processing pass, together with some brightness adjustments, blur, a little noise and refraction using a normal map.

We built a custom post processing module using a triangle instead of the classic fullscreen-quad, which seems to bring some performance benefits.
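The post-processing module itself is not shown here. As a rough sketch of the geometry behind the single-triangle approach, assuming the vertex shader passes clip-space positions straight through, the attribute data could look like this:

```javascript
// One oversized triangle in clip space that covers the whole viewport.
// Vertices: (-1, -1), (3, -1), (-1, 3). Everything outside [-1, 1] is clipped.
const positions = new Float32Array([-1, -1, 3, -1, -1, 3]);

// UVs follow from the clip-space positions: uv = position * 0.5 + 0.5,
// so the visible part of the triangle spans exactly 0..1.
const uvs = new Float32Array(positions.length);
for (let i = 0; i < positions.length; i++) {
  uvs[i] = positions[i] * 0.5 + 0.5;
}

console.log(Array.from(uvs)); // [0, 0, 2, 0, 0, 2]
```

Compared with a two-triangle quad, this uses three vertices instead of four to six and has no diagonal edge running across the screen, which is usually cited as the reason it can be slightly cheaper.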

The trail FBO is created as UnsignedByteType due to the lack of OES_texture_float on Samsung devices.

Since we don’t need much precision, the texture is relatively small (128px) and uses LinearFilter, so that it is “smoothed out” when scaled and applied in our post-processing.

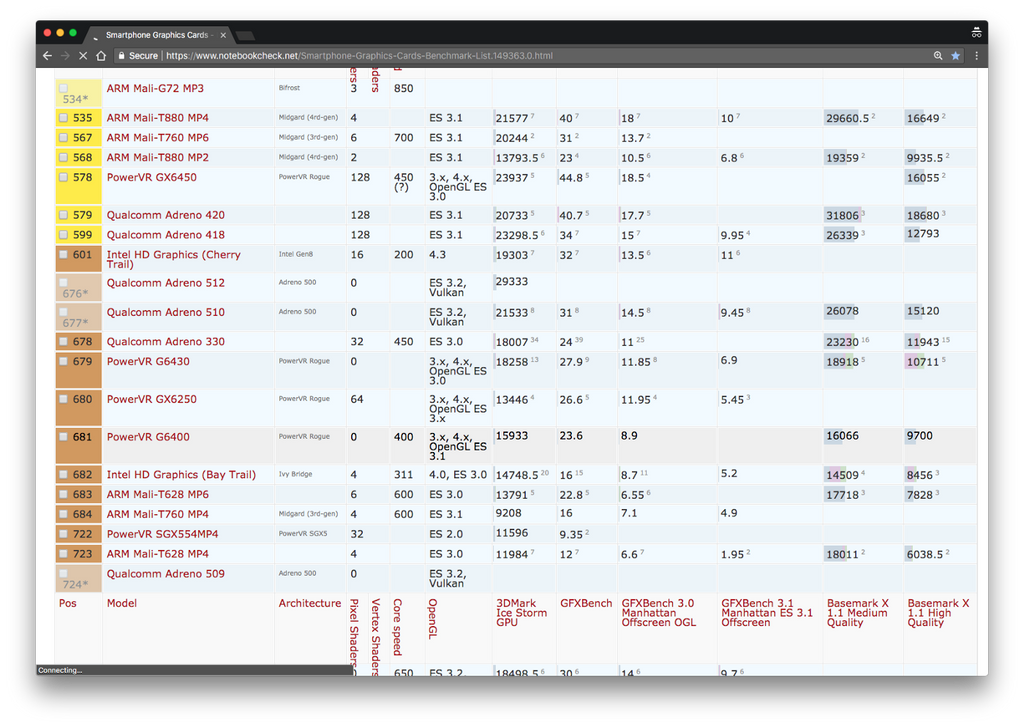

The minimum requirements for a Facebook Instant Game are:

This means a very broad range of devices, from low end to high end. It was crucial to maintain a smooth frame rate on all of them while preserving good quality on high-end devices.

We got some help from a very interesting WebGL extension called WEBGL_debug_renderer_info, which gives debug information about the video card being used.

```javascript
var canvas = document.createElement('canvas');
var gl = canvas.getContext('webgl');
var debug = gl.getExtension('WEBGL_debug_renderer_info');

gl.getParameter(debug.UNMASKED_RENDERER_WEBGL); // "NVIDIA GeForce GTX 1050 Ti OpenGL Engine"
```

Looking at those benchmarks, it seems clear that in most cases, within the same series or model line, the bigger the number, the better the GPU. By applying a series of regular expressions to the chipset name, we were able to compute a “score” for the user’s GPU.
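The actual expressions and scoring rules are not part of this article. As a purely illustrative sketch of the idea, the patterns, the divisor and the `scoreRenderer` name below are all assumptions:

```javascript
// Hypothetical scoring rules: the patterns and the scale are illustrative only
// and are not the ones used in the game.
const rules = [
  { pattern: /adreno \(tm\) (\d+)/, scale: 100 },
  { pattern: /mali-t(\d+)/, scale: 100 },
];

function scoreRenderer(renderer) {
  const name = renderer.toLowerCase();

  for (const { pattern, scale } of rules) {
    const match = name.match(pattern);
    if (match) {
      // Within one family, a bigger model number gives a bigger score.
      return parseInt(match[1], 10) / scale;
    }
  }

  return 0; // Unknown GPU: fall back to the lowest score.
}

console.log(scoreRenderer('Adreno (TM) 540')); // 5.4
console.log(scoreRenderer('Mali-T880'));       // 8.8
console.log(scoreRenderer('Some Unknown GPU')); // 0
```

In this sketch, scores are only meaningful within one GPU family. A real implementation would need per-family rules to make them comparable across vendors.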

In this case we applied the quality adaptation on mobile only, and our settings look something like this:

```javascript
import device from 'render/device';

const settings = {
  dpr: Math.min(1.5, window.devicePixelRatio || 1),
  gyroscope: true,
  fxaa: true,
  shadow: true,
  shadowSize: 1024,
};

if (device.isMobile) {
  if (device.score <= 0) {
    settings.dpr = Math.min(1.5, settings.dpr);
  }

  if (device.score < 6) {
    settings.shadow = false;
  }

  if (device.score <= 10) {
    settings.dpr = Math.min(1.8, settings.dpr);
    settings.shadowSize = 512;
  }

  if (device.oldAdreno) {
    settings.dpr = Math.min(1, settings.dpr);
    settings.fxaa = false;
    settings.shadow = false;
    settings.gyroscope = false;
  }

  if (device.gpu.gpu === 'mali-450 mp') {
    settings.dpr = 1;
    settings.fxaa = false;
    settings.gyroscope = false;
  }

  //...
}

export default settings;
```

This was part of the initial briefing: creating a game for Facebook’s Instant Game platform.

Great, something new!

We quickly understood that it’s all about using web technologies (HTML / JS / WebGL) and, even though we didn’t have access to the full documentation (early access), we were quite confident about the feasibility of it all.

We eventually got access to 100% of the documentation and to the “Instant Game Developer Community” private group (by far the one that makes the most noise in my timeline), and we started digging in: a little tic-tac-toe demo, interesting “guides” and a well documented SDK… we were ready to roll!

The onboarding was quite simple. It’s all about asynchronous and wild promises!

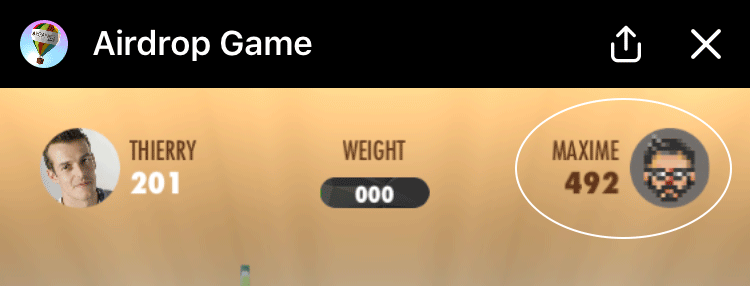

The biggest benefit for us was the easy management of the leaderboards. The possibility to add the score of the “next friend to shoot” directly in the interface via `getConnectedPlayerEntriesAsync()` seemed like a very stimulating feature.
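How the game picks that friend is not detailed here. A minimal sketch of one way to do it, assuming the entries expose `getScore()` and `getPlayer().getName()` as the SDK's leaderboard entries do (the `findNextFriendToBeat` helper and the leaderboard name are made up for illustration):

```javascript
// Hypothetical helper: pick the connected player with the lowest score
// that is still higher than the current player's score.
function findNextFriendToBeat(entries, myScore) {
  let next = null;

  for (const entry of entries) {
    const score = entry.getScore();
    if (score > myScore && (next === null || score < next.getScore())) {
      next = entry;
    }
  }

  return next; // null when nobody is ahead
}

// Assumed usage with the SDK (the leaderboard name is made up):
// FBInstant.getLeaderboardAsync('global')
//   .then((board) => board.getConnectedPlayerEntriesAsync(10, 0))
//   .then((entries) => findNextFriendToBeat(entries, currentScore));
```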

The management of data/stats by user also greatly simplified the implementation of “bonuses” and “gifts”.

Finally, the `payload` system via `getEntryPointData()` allowed us to easily integrate the behaviors related to the bot messages.

The fact that all sharing features are fully integrated and that the analytics dashboard is pretty complete is also noteworthy (but we didn’t expect anything less from Facebook).

Even if we ran across some limitations, it was a very good experience overall, especially since we could count on the creative, strategic and technical support of a dedicated Facebook team. This gave rise to some epic conference calls between Austria, the United Kingdom, Germany, Italy and… Belgium.

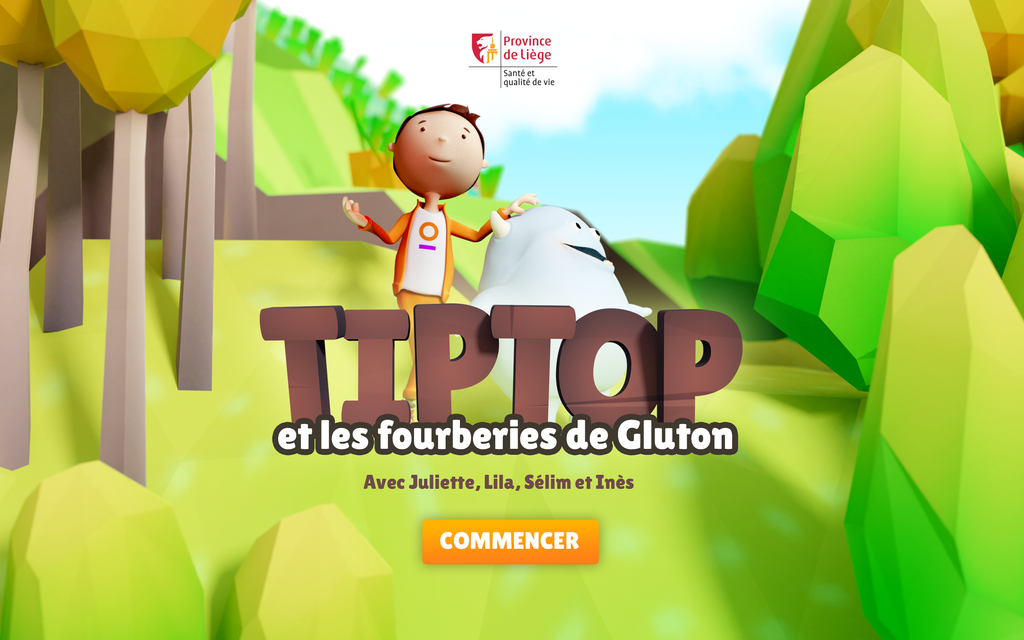
